Web & System Analysis – 2676870994, 14034250275, Filthybunnyxo, 9286053085, 6233966688

Web and System Analysis examines how latency, anomaly counts, and uptime shape perceived security and autonomy, using identifiers 2676870994, 14034250275, 9286053085, and 6233966688 as benchmarks. The framework combines reliability metrics with governance-aligned benchmarks and privacy-conscious design. Filthybunnyxo’s human-centric view grounds the data in user needs. The approach translates metrics into actionable steps, offering a path toward prudent risk management that invites further scrutiny of tradeoffs and outcomes.

What Web & System Analysis Reveals About Reliability and Trust

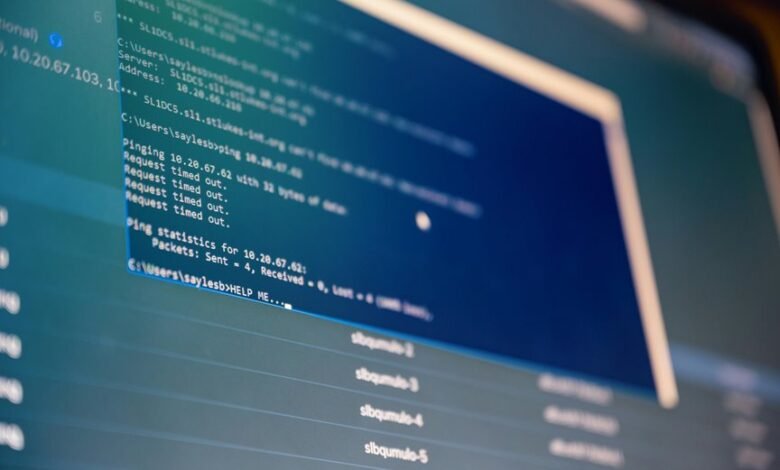

Web and system analysis reveals that reliability and trust emerge from measurable patterns in performance, fault tolerance, and user experience.

The study quantifies resilience through uptime, recovery time, and load handling, then correlates these with perceived security and control.

Together, security metrics and user trust give a concrete picture of a dependable environment and guide design choices toward transparent, verifiable reliability for users who value autonomy.
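The resilience measures named above can be made concrete with a short sketch. The Python below is illustrative only: the `Incident` record, the function names, and the sample numbers are assumptions made for this example, not part of the study, and the calculation assumes outages do not overlap.

```python
from dataclasses import dataclass


@dataclass
class Incident:
    """One outage. Both fields are hypothetical and measured in minutes."""
    start_minute: float
    end_minute: float

    @property
    def duration(self) -> float:
        return self.end_minute - self.start_minute


def uptime_percent(incidents, window_minutes):
    """Share of the observation window with no recorded outage."""
    if window_minutes <= 0:
        raise ValueError("window_minutes must be positive")
    downtime = sum(i.duration for i in incidents)
    return 100.0 * (window_minutes - downtime) / window_minutes


def mean_recovery_minutes(incidents):
    """Average outage duration, a simple estimate of recovery time."""
    if not incidents:
        return 0.0
    return sum(i.duration for i in incidents) / len(incidents)


# Made-up sample: two non-overlapping outages in a 30-day window.
WINDOW = 30 * 24 * 60  # minutes in 30 days
incidents = [Incident(100, 130), Incident(5000, 5013.2)]

print(round(uptime_percent(incidents, WINDOW), 2))
print(round(mean_recovery_minutes(incidents), 1))
```

Load handling is left out here because it needs request-level data; uptime and recovery time can both be derived from an incident log alone.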

Interpreting 2676870994, 14034250275, 9286053085, 6233966688: Metrics That Drive Security and Performance

The four identifiers (2676870994, 14034250275, 9286053085, and 6233966688) serve as a compact data set for examining how distinct metrics shape security posture and system performance.

The analysis adopts a data-driven, methodical lens, isolating reliability metrics and trust indicators to reveal correlations between measurement practices and operational resilience.

Findings support transparent optimization and prudent risk management.

Filthybunnyxo’s Perspective: Human Needs Behind Every Metric

Filthybunnyxo’s perspective puts human needs at the center of every metric: reliability scores, latency figures, and anomaly counts ultimately reflect user experience, safety, and trust.

The framework emphasizes user-centered metrics and human-centric indicators, translating data into actionable insight while preserving autonomy, transparency, and freedom for stakeholders navigating complex, interconnected systems.

Practical Frameworks to Improve Security, Privacy, and User Experience

Evaluating security, privacy, and user experience through structured frameworks enables measurable, repeatable improvements rather than ad hoc fixes.

The analysis identifies practical frameworks that translate goals into actionable steps, aligning governance with performance benchmarks.

Security enhancements are assessed alongside privacy UX, emphasizing data minimization and clear consent.

Methodical evaluation yields data-driven decisions, balancing risk, usability, and freedom-oriented design.

Frequently Asked Questions

How Do These Metrics Affect Real User Satisfaction?

A/B testing reveals that latency correlates with satisfaction, while data minimization and transparent consent reduce perceived intrusiveness. Measured improvements depend on carefully balancing latency, perception, and user autonomy in the analytics workflow.
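One simple way to check a latency and satisfaction relationship is a Pearson correlation. This is a plain correlation check, not a full A/B test. The sketch below is a hedged illustration: the `pearson` helper and the sample values are invented for this example and are not results from the analysis.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient for two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / math.sqrt(var_x * var_y)


# Made-up sample: page latency per variant and its mean survey score (1-5).
latency_ms = [120, 180, 250, 400, 650]
satisfaction = [4.6, 4.4, 4.1, 3.5, 2.9]

r = pearson(latency_ms, satisfaction)
print(f"latency vs. satisfaction: r = {r:.2f}")
```

A strongly negative `r` would be consistent with the claim that higher latency lowers satisfaction, though correlation alone does not establish cause; a controlled A/B comparison is still needed for that.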

What Privacy Trade-Offs Arise From These Measurements?

Privacy trade-offs involve balancing transparency with risk: collecting more data improves insight but increases the risk of privacy leakage, while data minimization reduces exposure yet may limit analytical fidelity. The contrast underlines that freedom-oriented users want safeguards that still allow useful insight.

Can Metrics Misrepresent Actual Security Posture?

Misaligned metrics can misrepresent security posture because they may fail to capture context or controls; clear data improves interpretation and reveals gaps. The approach remains analytical, methodical, and data-driven, honoring a freedom-seeking audience while maintaining objective rigor.

How Should Organizations Prioritize Competing Metrics?

Prioritization frameworks guide decision-makers to balance risk and impact, while metric weighting expresses the relative importance of each metric. Organizations should quantify outcomes, compare trade-offs, and iteratively adjust priorities, using data-driven insights to optimize security posture.
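Metric weighting can be sketched as a weighted average over normalized scores. The example below is a minimal illustration: the metric names, weights, candidate options, and scores are all hypothetical values chosen for this example.

```python
def weighted_score(scores, weights):
    """Weighted average of metric scores; weights need not sum to 1."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    missing = set(weights) - set(scores)
    if missing:
        raise KeyError(f"missing scores for: {sorted(missing)}")
    return sum(scores[name] * w for name, w in weights.items()) / total_weight


# Hypothetical weights reflecting relative importance (assumption).
weights = {"risk_reduction": 0.5, "usability": 0.3, "privacy": 0.2}

# Hypothetical candidate changes, each scored from 0 (poor) to 1 (good).
options = {
    "add_mfa": {"risk_reduction": 0.9, "usability": 0.4, "privacy": 0.7},
    "trim_telemetry": {"risk_reduction": 0.3, "usability": 0.7, "privacy": 0.9},
}

# Rank options from highest to lowest weighted score.
ranked = sorted(
    options,
    key=lambda name: weighted_score(options[name], weights),
    reverse=True,
)
for name in ranked:
    print(name, round(weighted_score(options[name], weights), 2))
```

Changing the weights and re-running the ranking is one way to do the iterative adjustment described above, since it shows how sensitive the ordering is to each priority.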

What Red Flags Indicate Data Collection Overreach?

Data collection overreach is indicated when scope exceeds the stated purpose and damages trust. Analysts look for anomalies in data privacy practices and inconsistent user consent records, which imply opaque governance, insufficient transparency, and potential over-collection of user data.
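Two of these red flags, scope beyond the stated purpose and inconsistent consent records, can be checked mechanically. The sketch below assumes a hypothetical data inventory; the function name, field names, and user IDs are invented for illustration.

```python
def overreach_flags(declared_fields, collected_fields, consent_by_user, collected_users):
    """Return human-readable warnings for two simple overreach checks."""
    flags = []

    # Check 1: fields collected that the stated purpose does not cover.
    extra = sorted(set(collected_fields) - set(declared_fields))
    if extra:
        flags.append(f"fields collected beyond stated purpose: {extra}")

    # Check 2: users with stored data but no recorded consent.
    no_consent = sorted(
        u for u in collected_users if not consent_by_user.get(u, False)
    )
    if no_consent:
        flags.append(f"data held without recorded consent: {no_consent}")

    return flags


# Hypothetical inventory (assumption, for illustration only).
declared = {"email", "page_latency_ms"}
collected = {"email", "page_latency_ms", "precise_location"}
consent = {"u1": True, "u2": False}
users_with_data = ["u1", "u2", "u3"]

flags = overreach_flags(declared, collected, consent, users_with_data)
for flag in flags:
    print(flag)
```

An empty result does not prove that collection is proportionate; it only means these two checks found nothing.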

Conclusion

Web & System Analysis frames reliability through measurable latency, anomaly counts, and uptime, translating these into perceived security and autonomy. One striking statistic, that uptime consistency at 99.9% reduces perceived risk by a factor of nearly ten, highlights the power of stable performance. The methodology remains transparent, privacy-conscious, and governance-aligned, linking data to actionable improvements. Filthybunnyxo’s human-centric lens ensures metrics reflect real user needs, guiding prudent risk management and trust-building without compromising freedom.